How to Improve the Accuracy of Automatic Transcription

98% accurate, real-time transcription in just a few clicks. 58 languages and multiple platforms supported.

Speech recognition technology is widely used in smartphones and other services to convert human speech into text, and AI-based voice assistants such as Siri and those built into smart speakers have become commonplace.

Principles of automatic speech recognition

Automatic speech recognition (ASR) is a technology that uses AI algorithms to convert spoken audio into text. Broadly, it extracts acoustic features from the audio, maps them to likely sounds or words, and uses a language model to choose the most probable sequence of words. It is used in many fields, including transcription, real-time subtitling, and voice assistants.

Notta's transcription (speech recognition) accuracy can reach 98.86%. If your results fall short of that, or you want to improve them further, the sections below cover common causes of errors and how to address them. Our research shows that speech recognition is more accurate when recordings are made in a quiet environment.
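Accuracy figures for speech recognition are commonly reported as 1 minus the word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn a transcript into a correct reference, divided by the number of words in the reference. This article doesn't say exactly how the 98.86% figure was measured, so the Python sketch below only illustrates the standard WER calculation; the function name `word_error_rate` is our own.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # At the start of iteration i, prev[j] = edits needed to turn the first
    # i-1 reference words into the first j hypothesis words (one DP row).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing
                curr[j - 1] + 1,     # insertion: extra word in transcript
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[len(hyp)] / len(ref)


wer = word_error_rate("the meeting starts at nine", "the meeting start at nine")
print(f"WER: {wer:.0%}, accuracy: {1 - wer:.0%}")  # WER: 20%, accuracy: 80%
```

One misrecognized word in a five-word sentence already costs 20 points of accuracy, which is why short recordings can swing widely while long ones give a more stable figure.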

Why does speech recognition make mistakes?

Speech recognition can go wrong for several reasons.

1. Sound-capturing equipment

Built-in microphone

Built-in microphones are the microphones integrated into portable devices such as smartphones and tablets. They can cause the following problems for speech recognition.

Noise effects: The built-in microphone sits inside the device, so it can pick up the device's electronic noise and vibrations.

Distance and volume: Sound quality depends on the distance between the speaker's mouth and the built-in microphone. The farther apart they are, the quieter and less clear the audio will be.

External microphone

An external microphone is a device that can capture sound from a source or an environment and convert it into an electrical signal. External microphones can be connected to a computer or other devices via USB, Bluetooth, or auxiliary cables. External microphones can offer better audio quality than built-in microphones, but they also come with some challenges and limitations.

Sound pickup: Even with an external microphone, you may not get good sound quality if you speak too softly or too far away from it.

Differences in sound quality: An external microphone usually sounds better than a built-in one, but only if the microphone itself is good. A low-quality external microphone may perform no better than the built-in one.

Connection: External microphones are easy to carry, but they must stay connected to the recording device, and an unstable connection can interrupt or degrade the audio.

Bluetooth earphones

Bluetooth earphones are wireless earbuds for music and calls that connect to Bluetooth-enabled devices such as music players, smartphones, tablets, and laptops.

There are several considerations when using Bluetooth earphones.

Sampling rate: Some Bluetooth earphones support only a low sampling rate of 8 kHz. The higher the sampling rate, the more accurately the original signal is represented: a digital signal can only represent frequencies up to half its sampling rate, so 8 kHz audio contains nothing above 4 kHz and loses much of the high-frequency energy that distinguishes consonants such as "s" and "f". This loss lowers transcription accuracy.

This is not a problem if your earphones support 16 kHz wideband speech (HD Voice) and the device recognizes them as 16 kHz earphones. Sampling rates above 16 kHz are generally not required for speech recognition.
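The effect of the sampling rate follows from the Nyquist limit: audio sampled at rate fs can only represent frequencies below fs/2. The sketch below, with hypothetical helper names, computes that limit and shows where a 5 kHz tone would land if it were sampled with no filtering. Real headsets apply an anti-aliasing filter before sampling, so in practice content above the limit is simply removed rather than folded down to a false lower frequency.

```python
def nyquist_hz(sample_rate_hz: float) -> float:
    """Highest frequency a signal sampled at this rate can represent."""
    return sample_rate_hz / 2


def alias_frequency_hz(tone_hz: int, sample_rate_hz: int) -> int:
    """Frequency at which a pure tone appears after sampling with no anti-aliasing filter."""
    return abs(tone_hz - sample_rate_hz * round(tone_hz / sample_rate_hz))


for rate in (8_000, 16_000):
    print(f"{rate} Hz sampling keeps content up to {nyquist_hz(rate):.0f} Hz; "
          f"a 5000 Hz tone appears at {alias_frequency_hz(5000, rate)} Hz")
```

At 8 kHz the 5 kHz tone cannot be represented faithfully, while at 16 kHz it passes through unchanged, which is why 16 kHz wideband earphones are sufficient for speech.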

Other recording equipment

Before purchasing equipment, we recommend testing its recording quality, pickup distance, and microphone directivity.

Microphone directivity (also called the polar pattern) describes how sensitive a microphone is to sound arriving from different directions. The common patterns are described below.

Cardioid

A cardioid microphone is directional: it picks up sound mainly from the front (where the speaker's mouth is usually placed) and very little from the back. This makes it suitable for recording a single sound source with high quality and low noise.

Omnidirectional

An omnidirectional microphone picks up sound equally from every direction. It reproduces the natural sound of the environment where it is placed, which makes it suitable for recording ambient sound, but it also picks up more background noise than a directional microphone.

Bidirectional

A bidirectional (figure-8) microphone is sensitive to sound from the front and the back but not from the sides. It is used to record conversations, interviews, performances, and stage audio, for example with two people facing each other across the microphone. Because it rejects sound arriving from the sides, it can capture the target sources clearly with little noise from the surroundings.

Supercardioid

A supercardioid microphone has a narrower front pickup than a cardioid and rejects more sound from the sides, at the cost of a small lobe of sensitivity directly behind it. It is useful when there are many sound sources around.

Hypercardioid

A hypercardioid microphone has an even narrower front pickup than a supercardioid and rejects still more sound from the sides, but its rear lobe is larger. It emphasizes sound from the front over other directions.

Binaural

Binaural refers to hearing with both ears, which is how a listener perceives the direction of a sound source. Binaural recording uses a pair of microphones placed where the ears would be, often in a dummy head shaped like a human head, to make a stereo recording. Played back over headphones, it recreates the three-dimensional sound field, giving the listener a realistic and immersive experience.
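The single-microphone patterns above can be approximated by the textbook first-order polar equation r(θ) = a + (1 - a)·cos θ, where θ is the angle from the front of the microphone. The coefficients below are common idealized values, not measurements of any specific microphone (real patterns vary with frequency), and binaural recording is left out because it uses a pair of microphones rather than a single pattern. The names `PATTERN_COEFFICIENTS` and `sensitivity_db` are our own.

```python
import math

# Idealized first-order patterns: r(theta) = a + (1 - a) * cos(theta).
# Coefficients are common textbook approximations, not measured data.
PATTERN_COEFFICIENTS = {
    "omnidirectional": 1.0,
    "cardioid": 0.5,
    "supercardioid": 0.37,
    "hypercardioid": 0.25,
    "bidirectional": 0.0,  # figure-8
}


def sensitivity_db(pattern: str, angle_deg: float) -> float:
    """Sensitivity relative to the front (0 degrees), in dB."""
    a = PATTERN_COEFFICIENTS[pattern]
    r = abs(a + (1 - a) * math.cos(math.radians(angle_deg)))
    # Treat tiny floating-point residue (e.g. cos(90 deg)) as a true null.
    return 20 * math.log10(r) if r > 1e-12 else float("-inf")


for name in PATTERN_COEFFICIENTS:
    side = sensitivity_db(name, 90)
    rear = sensitivity_db(name, 180)
    print(f"{name:>16}: side {side:7.1f} dB, rear {rear:7.1f} dB")
```

Comparing 90° (the side) with 180° (the rear) shows the trade-off: the supercardioid and hypercardioid reject more side noise than the cardioid but pick up more from directly behind.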

2. Recording environment

The recording environment also strongly affects speech recognition accuracy. Background noise interferes with recognition, so recording in a quiet, suitable space improves results. Here are some tips for recording better audio.
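A common way to quantify how noisy an environment is, is the signal-to-noise ratio (SNR) in decibels: 20·log10 of the speech level (RMS) divided by the noise level. The sketch below assumes you have two recordings as lists of float samples: a few seconds of room noise alone and a passage of speech recorded in the same place. Because the speech recording also contains that noise, the result is an approximation, and the function names are hypothetical.

```python
import math


def rms(samples: list[float]) -> float:
    """Root-mean-square level of a block of samples."""
    if not samples:
        raise ValueError("need at least one sample")
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def snr_db(speech: list[float], noise: list[float]) -> float:
    """Approximate SNR: speech RMS versus noise-only RMS, in decibels."""
    noise_level = rms(noise)
    if noise_level == 0:
        raise ValueError("noise recording is silent; SNR is undefined")
    return 20 * math.log10(rms(speech) / noise_level)


# Toy signals: a 200 Hz tone standing in for speech and a 50 Hz hum
# with one tenth of its amplitude, both one second long at 16 kHz.
speech = [0.3 * math.sin(2 * math.pi * 200 * n / 16_000) for n in range(16_000)]
noise = [0.03 * math.sin(2 * math.pi * 50 * n / 16_000) for n in range(16_000)]
print(f"SNR: {snr_db(speech, noise):.1f} dB")
```

A higher SNR means the voice stands out more clearly from the background; moving to a quieter room raises it without any change to the microphone.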

Meeting

When recording a meeting, we recommend using a speakerphone, a device that combines a microphone and a speaker.

Speakerphones are useful when several participants share one device, because everyone can speak freely without passing a microphone around. They are common in conference rooms and offices.

The greatest advantage of a speakerphone is its pickup range. A PC's built-in microphone is small and picks up sound poorly, which makes it inadequate for meetings with several people, whereas a speakerphone can capture the voices of people seated farther away. Many speakerphones also adjust volume automatically, which helps everyone in the meeting communicate clearly.

Interview

There are several tips for recording the audio of an interview.

First, record in a quiet, low-noise environment and speak close to the microphone, but not so close that your voice becomes distorted. Next, make a test recording before the real interview: check the level and clarity of the voice, listen to how it sounds, and fix any problems. Finally, make sure the interviewer speaks clearly.

By following these tips, you will be able to record clear and accurate audio.
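The level check in a test recording can also be done numerically. The sketch below assumes the recording has already been decoded into floating-point samples between -1.0 and 1.0; it reports peak and RMS levels in dBFS (decibels relative to full scale) and counts samples at or near full scale, which usually indicate clipping. The function name and both thresholds are illustrative assumptions, not values from Notta or any standard.

```python
import math


def check_levels(samples: list[float],
                 clip_threshold: float = 0.99,
                 quiet_peak_dbfs: float = -20.0) -> dict:
    """Summarize a test recording given float samples in [-1.0, 1.0].

    clip_threshold and quiet_peak_dbfs are illustrative defaults (assumptions).
    """
    if not samples:
        raise ValueError("empty recording")
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))

    def to_dbfs(x: float) -> float:
        return 20 * math.log10(x) if x > 0 else float("-inf")

    peak_dbfs = to_dbfs(peak)
    return {
        "peak_dbfs": peak_dbfs,
        "rms_dbfs": to_dbfs(rms),
        # Samples at or near full scale usually mean the input was clipped.
        "clipped_samples": sum(1 for s in samples if abs(s) >= clip_threshold),
        # A loudest peak this far below full scale suggests the voice is too quiet.
        "too_quiet": peak_dbfs < quiet_peak_dbfs,
    }


tone = [0.5 * math.sin(2 * math.pi * 440 * n / 16_000) for n in range(16_000)]
print(check_levels(tone))
```

If the report shows clipped samples, lower the input gain or move back from the microphone; if it flags the recording as too quiet, move closer or raise the gain, then record another test.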

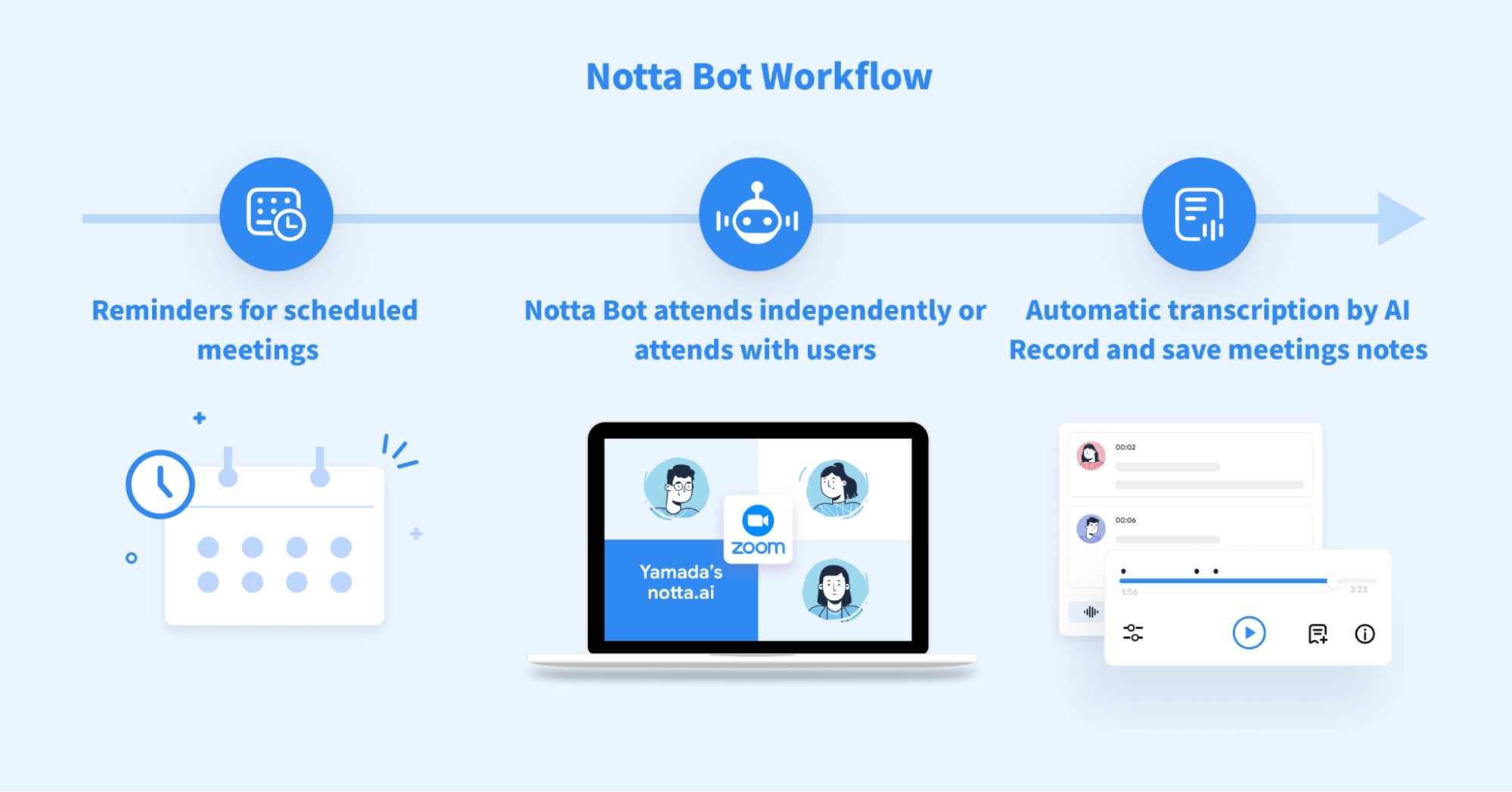

Use Notta Bot to transcribe meetings in real time!

*Notta Bot supports Zoom/Microsoft Teams/Google Meet/Webex meetings.

With Notta Bot, recording and transcription accuracy is much higher than with the default live transcription of Zoom and other web conferencing tools.

You can also have Notta Bot automatically join your meetings by adding events to your Google Calendar. This can further improve efficiency.

After the meeting, you can edit the transcribed content as needed. In addition, meeting summaries can be instantly shared with participants via PC or smartphone.

What's more, you can use the translation feature to share the meeting transcript with people in other time zones or locations, even if they speak a different language. This saves time and speeds up information sharing.

Notta AI meeting assistant records, transcribes, and summarizes meetings so everyone can stay engaged without missing important details.

3. Recording distance

Microphones built into PCs and webcams are not designed to record multiple people.

Built-in microphones typically have an effective pickup range of only about 30 cm to 1 m, which makes it difficult to record several people accurately, especially when they talk at the same time. If you must record a group with a built-in microphone, take the following precautions.

Keep an appropriate distance between the microphone and each speaker.

If a speaker is too close to the microphone, the audio may be distorted; if a speaker is too far away, their voice becomes faint and may break up, making it hard to hear.

Place the microphone according to where the speakers are sitting.

Placing the microphone as close to the center of the group as possible picks up all speakers' voices more evenly.

When you transcribe a conversation between multiple speakers with a built-in microphone, these precautions help create a recording environment that produces more accurate, easier-to-read transcripts.

However, for higher-quality recordings, the use of an external microphone such as a speakerphone is recommended.
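The effect of distance can be estimated with the inverse-square law: in open space, the level of a speaker's direct sound drops by about 6 dB each time the distance to the microphone doubles, while the background noise stays roughly the same. Room reflections change this, so treat it as a rough guide. The hypothetical helper below computes the change; moving from 30 cm to 1 m, the pickup range quoted above for built-in microphones, costs roughly 10.5 dB.

```python
import math


def level_change_db(distance_from_m: float, distance_to_m: float) -> float:
    """Change in direct-sound level when a speaker moves between two distances.

    Free-field inverse-square approximation: -20 * log10(d_to / d_from).
    Negative values mean the voice reaches the microphone more quietly.
    """
    if distance_from_m <= 0 or distance_to_m <= 0:
        raise ValueError("distances must be positive")
    return -20 * math.log10(distance_to_m / distance_from_m)


print(f"0.5 m -> 1 m: {level_change_db(0.5, 1.0):.1f} dB")
print(f"0.3 m -> 1 m: {level_change_db(0.3, 1.0):.1f} dB")
```

Because the room noise does not get quieter as a speaker moves away, the signal-to-noise ratio falls by about the same number of decibels, which is why the farthest speaker at a table is usually the least accurately transcribed.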

4. Speakers

Although speech recognition systems are trained on a wide variety of speakers, speech that is difficult for humans to understand is also difficult for speech recognition AI. Such speech typically has characteristics like these:

Speech that is too fast

Volume that is too high or too low

Strong accents or dialects

Words with similar pronunciations

Speak clearly and naturally, at your normal conversational tone and pace.

Speaking this way improves the quality of the speech data and, in turn, recognition accuracy.

Multiple people talking at once

When several people speak at the same time, their voices overlap and become harder to tell apart, so the recognizer has trouble transcribing any of them accurately. Each voice also acts as interference for the others, which further lowers accuracy. Avoid having two or more people speak at the same time whenever possible.
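If your transcription tool labels who spoke when (speaker diarization), you can locate overlapping passages to review by hand. The sketch below assumes a hypothetical `Segment` record with a speaker label and start and end times in seconds; it is not a Notta export format, and `find_overlaps` is our own name.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    """Hypothetical diarization output: who spoke, and when (seconds)."""
    speaker: str
    start: float
    end: float


def find_overlaps(segments: list[Segment]) -> list[tuple[str, str, float, float]]:
    """Return (speaker_a, speaker_b, overlap_start, overlap_end) for cross-talk."""
    ordered = sorted(segments, key=lambda s: s.start)
    overlaps = []
    for i, a in enumerate(ordered):
        for b in ordered[i + 1:]:
            if b.start >= a.end:
                break  # sorted by start, so no later segment can overlap a
            if a.speaker != b.speaker:
                overlaps.append((a.speaker, b.speaker, b.start, min(a.end, b.end)))
    return overlaps


segments = [
    Segment("Interviewer", 0.0, 5.0),
    Segment("Guest", 4.2, 9.0),
    Segment("Interviewer", 9.5, 12.0),
]
for a, b, start, end in find_overlaps(segments):
    print(f"{a} and {b} overlap from {start:.1f}s to {end:.1f}s")
```

Sorting by start time lets the inner loop stop early, so only segments that actually begin before the current one ends are compared.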

Technical terms and proper nouns

Technical terms and proper nouns may not be transcribed accurately. Because such terms belong to specific fields, a recognizer that relies only on a general vocabulary often misses them. In such cases, we recommend registering these terms as custom vocabulary in your transcription software so that it recognizes them more reliably.
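Custom vocabulary features work inside the recognizer, and this article doesn't describe how Notta implements them. As a rough stand-in, the sketch below shows a different technique: post-correcting a finished transcript by replacing words whose spelling is close to a term in your own list. It uses Python's standard `difflib.get_close_matches`; the function name `apply_vocabulary` and the 0.8 similarity cutoff are assumptions to tune.

```python
import difflib


def apply_vocabulary(transcript: str, vocabulary: list[str], cutoff: float = 0.8) -> str:
    """Replace words that closely resemble a custom term with that term's spelling."""
    lookup = {term.lower(): term for term in vocabulary}
    candidates = list(lookup)
    corrected = []
    for word in transcript.split():
        match = difflib.get_close_matches(word.lower(), candidates, n=1, cutoff=cutoff)
        corrected.append(lookup[match[0]] if match else word)
    return " ".join(corrected)


print(apply_vocabulary("we deployed it on kubernetis", ["Kubernetes", "Notta"]))
```

This simple approach only handles single words separated by spaces and can miscorrect ordinary words that happen to resemble a term, so review its changes rather than applying them blindly.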

Key takeaways

For word errors

Some word errors may appear in the transcript, but when the voice is clear and the audio quality is good, speech is almost always recognized correctly.

For audio with clear pronunciation

Professional announcers speak clearly and are usually recorded with good audio quality, so their speech is transcribed almost perfectly.

For interviews and virtual meetings

Conversational speech is not always grammatical, so some parts may be transcribed incorrectly, and fillers may appear in the text exactly as spoken. Also, if two or more people speak or laugh at the same time, those passages may not be transcribed accurately.

When recording with a microphone

Everything spoken into the microphone is transcribed as is.

As a result, fillers (words that add no meaning to speech or writing) may also appear in the text. Common examples include "uh," "um," "well," and "so."

Ideally, keep the speaker 20 cm to 60 cm from the microphone to record high-quality sound.
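If you want a cleaner transcript, fillers can be removed afterward. The sketch below deletes only unambiguous hesitation sounds ("uh," "um," and "er") with a regular expression; it deliberately leaves "well" and "so" alone, because removing them automatically would also delete them where they carry meaning. The pattern and the function name `remove_fillers` are our own illustration.

```python
import re

# Standalone hesitation sounds only, plus a trailing comma and spaces.
# "well" and "so" are left out on purpose because they often carry meaning.
FILLER_PATTERN = re.compile(r"\b(?:uh|um|er)\b,?\s*", re.IGNORECASE)


def remove_fillers(text: str) -> str:
    cleaned = FILLER_PATTERN.sub("", text)
    # Collapse any double spaces left behind and trim the ends.
    return re.sub(r"\s{2,}", " ", cleaned).strip()


print(remove_fillers("Uh, I think, um, we should ship it"))
```

The word boundaries (`\b`) keep words such as "umbrella" intact, since "um" must stand on its own to match.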